Now that we’ve got Alexa talking to our mirror, it’s time to go one step further. I want Alexa to show a picture of someone or something on the mirror when I ask for it.

I’ll be doing this in a few steps.

- I want to be able to do this in the Debug hub. Period. No voice interaction or anything.

- I want to be able to ask Alexa to show a picture by sending the command on to the mirror, and let the mirror search for an image. In this scenario Alexa won’t respond with audio (or at least nothing meaningful), but only send the command.

- The last scenario is to let Alexa search for an image and have it explain something to me. In this scenario I want to be able to choose between visual feedback only and both audio and visual feedback.

The scenario with only audio feedback already exists: that’s the Alexa service itself.

So let’s get cracking. The first thing to decide is which service to use to get images. There are a few options. The first one that comes to mind is Google: the Custom Search API does something like that. More information here. That’s cool. You get 100 free API calls per day. That’s 3100 in a long month.

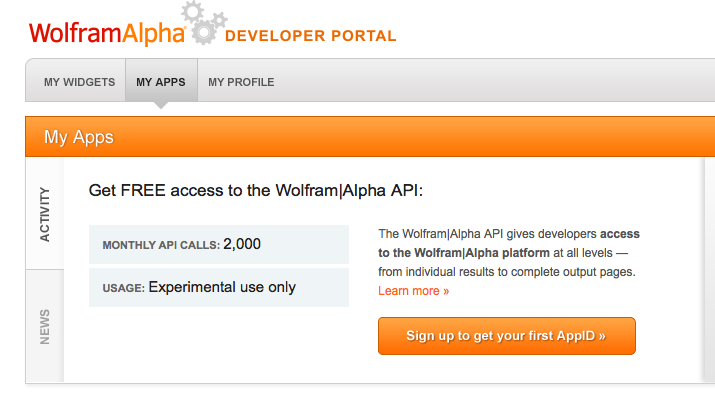

An alternative is Wolfram Alpha. It is very similar, but you “only” get 2000 calls per month. I kind of like this one because it gives you a lot of info, and because the limit is monthly, days with a lot of calls can be offset by days with few calls.
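To make the difference between a daily cap and a monthly pool concrete, here is a small sketch. The two quotas come from the text above; the helper names and the usage pattern (a few busy days, many quiet ones) are made up purely for illustration.

```javascript
// Quotas as stated in the post.
const GOOGLE_CALLS_PER_DAY = 100;
const WOLFRAM_CALLS_PER_MONTH = 2000;

// Hypothetical helper: calls that get served when every day has its own cap.
function servedWithDailyCap(callsPerDay, dailyCap) {
  return callsPerDay.reduce((sum, calls) => sum + Math.min(calls, dailyCap), 0);
}

// Hypothetical helper: calls that get served from one shared monthly pool.
function servedWithMonthlyPool(callsPerDay, monthlyPool) {
  const total = callsPerDay.reduce((sum, calls) => sum + calls, 0);
  return Math.min(total, monthlyPool);
}

// Made-up usage for a 31-day month: 5 busy days of 250 calls, 26 quiet days of 10.
const usage = [...Array(5).fill(250), ...Array(26).fill(10)];

const servedDaily = servedWithDailyCap(usage, GOOGLE_CALLS_PER_DAY);
const servedPooled = servedWithMonthlyPool(usage, WOLFRAM_CALLS_PER_MONTH);

console.log("daily cap:", servedDaily, "monthly pool:", servedPooled);
```

With this invented pattern, the daily cap cuts off the busy days even though the monthly total is well below 3100, while the monthly pool serves every call.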

Anyway. I might make an alternative for both, and combine them. Let’s see.

So let’s start with Wolfram Alpha. You can get started with API development here.

It’s quite straightforward. Just register with Wolfram Alpha as a user, and then create a new application.

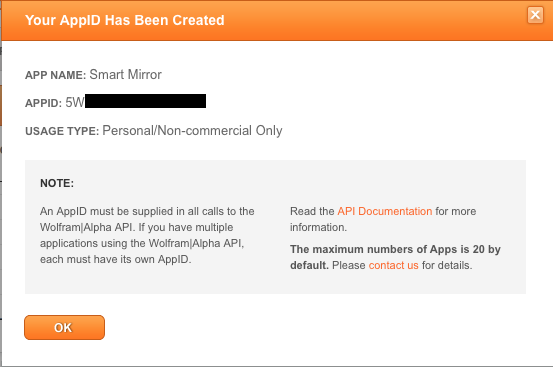

Just give it a name and a description.

And there you have it.

Theoretically you could create multiple App IDs, and hence increase the number of calls you can make. However, the site clearly states that the maximum number of apps is 20. I could actually make separate ones for the debug hub, the mirror and Alexa. But let’s start with a single one.

The next thing to do is extend the debug hub with an extra tab on the left-hand side, containing a query text area and a button.

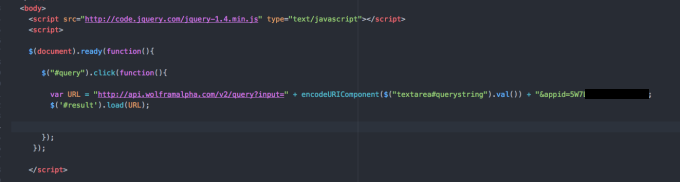

Writing the code is pretty easy. Look at the extract below.

The only thing it does is construct a URL by concatenating the value of the text area into it, followed by the App ID. The text area is referenced via jQuery; the element is called “querystring”. The encodeURIComponent function takes care of encoding the query value so it is safe to use in a URL: spaces get replaced with %20, and other characters that are not allowed in a URL get percent-encoded as well.
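As a rough sketch of the URL construction described above (not the exact code from the debug hub): the endpoint and the `input` / `appid` parameter names are my assumption of the Wolfram Alpha query API, and `buildWolframUrl` is a hypothetical helper name.

```javascript
// Assumed endpoint; the post itself does not spell out which one is used.
const WOLFRAM_ENDPOINT = "https://api.wolframalpha.com/v2/query";

// Hypothetical helper: builds the request URL from the query text and the App ID.
function buildWolframUrl(query, appId) {
  return (
    WOLFRAM_ENDPOINT +
    "?input=" + encodeURIComponent(query) + // spaces become %20, "&" becomes %26
    "&appid=" + encodeURIComponent(appId)
  );
}

// In the debug hub the query would come from the text area via jQuery,
// e.g. $("#querystring").val(), assuming "querystring" is the element's id.
const exampleUrl = buildWolframUrl("Albert Einstein & friends", "YOUR-APP-ID");
console.log(exampleUrl);
```

Encoding the query matters here because a raw `&` or `=` typed into the text area would otherwise be read as part of the URL structure rather than as part of the search text.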

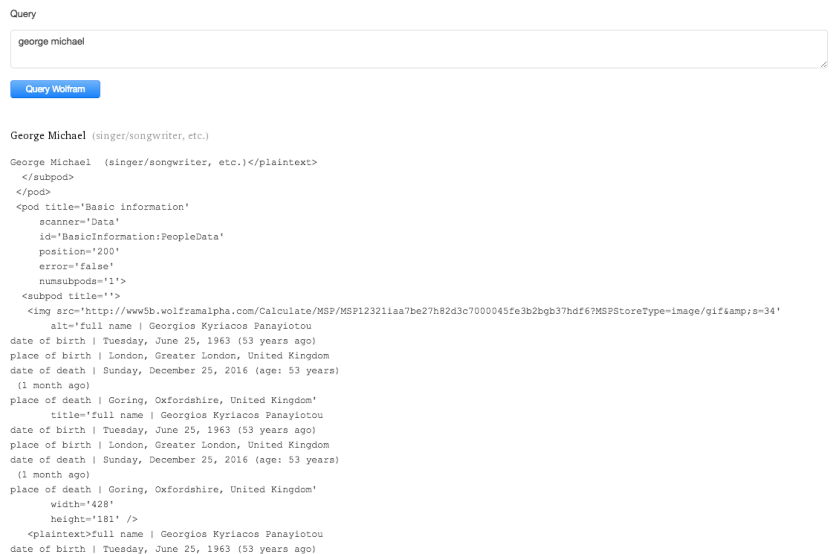

The result is displayed below. Notice how it’s a whole bunch of information. The image is in there, but so is a lot of other information we could leverage. But that’s for later on.

Small note: getting the information back from Wolfram Alpha took several seconds. That might prove to be an issue in the future, but for now, let’s be happy with what we’ve got.

The next step will be extracting the image from this information. I’ll keep you posted on that one.